Artificial intelligence is becoming part of everyday working life – often faster than companies can react. An AI policy provides the framework for the secure, compliant and efficient use of AI systems. However, many AI policies fall short because they address the wrong problem. This article outlines the key components of an effective AI policy.

- Manufacturers who use AI in their regulatory processes should define an AI policy.

- They should not confuse data protection with IP protection. In most cases, it is the latter that is at stake.

- The AI policy should comprise seven key elements.

The Big Misunderstanding: It’s Not About Data Protection

If you ask companies what their biggest concerns are regarding the use of AI, the answer is usually: data protection. Yet in most use cases, the issue is not the protection of personal data as defined by the GDPR at all.

It is about IP protection – the protection of your crown jewels: development documents, design data and trade secrets. The risk is real: confidential information fed into an AI system could be used to train the provider's models and then reach competitors indirectly, for example through the model's outputs.

An effective AI policy must therefore focus on IP protection, not data protection.

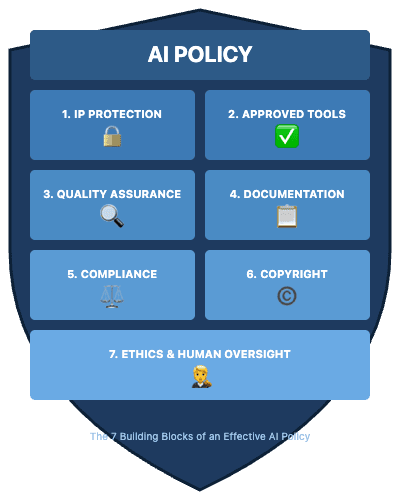

The 7 Building Blocks Of An Effective AI Policy

1. IP Protection

- Only input confidential information into AI systems whose providers are contractually prohibited from using customer data for training

- Alternative: Self-hosted models under your own control

- Clearly define which information is considered confidential

2. Approved Tools

- Maintain a whitelist of approved AI tools

- Prohibit the use of unauthorised tools (“shadow AI”)

- Define an approval process for new tools

Most manufacturers already maintain a list of IT systems, tools and/or measuring equipment to which AI tools can be added.

3. Quality Assurance

- Verify AI-generated content before use

- Take particular care with regulatory documents

- Responsibility remains with humans – hallucinations do not exempt you from the duty to verify

If AI tools are used as part of quality management processes (which is almost always the case), they are considered computerised systems and must be validated.

4. Transparency And Documentation

- Establish guidelines for labelling AI-generated content

- Document the prompts and models used for relevant results

- Ensure traceability for audits

Prompts and structured data are the new templates. As such, they are subject to the same document control requirements as conventional templates.
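To make the traceability requirement more concrete, here is a minimal sketch in Python of what a record for one AI-assisted result might capture, assuming one record is kept per result that is reviewed and used. The class `AIUsageRecord`, its field names and the helper functions `make_record` and `matches` are hypothetical illustrations, not part of any standard or tool, and the example values are placeholders.

```python
import hashlib
from dataclasses import asdict, dataclass
from datetime import date


@dataclass(frozen=True)
class AIUsageRecord:
    """Hypothetical audit record for one AI-assisted result (illustrative field names)."""
    tool: str             # approved tool, as listed in the tool whitelist
    model_version: str    # model name/version used for this result
    prompt_id: str        # ID of the prompt under document control
    prompt_revision: str  # revision of that controlled prompt
    output_sha256: str    # fingerprint of the output that was reviewed
    reviewed_by: str      # person who verified the output before use
    review_date: date


def make_record(tool: str, model_version: str, prompt_id: str,
                prompt_revision: str, output: str,
                reviewed_by: str, review_date: date) -> AIUsageRecord:
    """Create a record; only a SHA-256 hash of the output is stored, not the output itself."""
    digest = hashlib.sha256(output.encode("utf-8")).hexdigest()
    return AIUsageRecord(tool, model_version, prompt_id, prompt_revision,
                         digest, reviewed_by, review_date)


def matches(record: AIUsageRecord, output: str) -> bool:
    """Check (e.g. during an audit) whether a given text is the output that was reviewed."""
    return hashlib.sha256(output.encode("utf-8")).hexdigest() == record.output_sha256


if __name__ == "__main__":
    rec = make_record("ExampleAssistant", "example-model-1.0", "PRM-001", "B",
                      "Draft summary of the clinical evaluation plan",
                      "J. Doe", date(2024, 1, 15))
    print(asdict(rec))
    print(matches(rec, "Draft summary of the clinical evaluation plan"))  # True
```

Storing only a hash of the output keeps the record small and lets an auditor confirm that a filed document is the version that was reviewed. Whether the full prompt text and output must also be archived is a decision for your own document control procedure.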

5. Compliance

- Assess AI systems in use by risk category

- For high-risk applications (as defined by the AI Act): comply with enhanced requirements

- Implement mandatory training (AI literacy) for staff

Most AI tools do not qualify as high-risk AI systems under the AI Act. However, the requirements of the MDR and ISO 13485 still apply. These include requirements for

- the definition and demonstration of competencies (even on a per-development-project basis)

- computerised systems validation

6. Copyright

- Clarify usage rights for AI-generated outputs

- Be aware of the risk of copyright infringement

- Where appropriate, treat AI output as a draft, not as a finished work

7. Ethics And Human Oversight

- No automated decisions without human review (in an HR context, note that without such review, the systems in use may qualify as high-risk AI systems)

- Raise awareness of bias in AI results

- Principle: AI supports – humans decide

Conclusion: Prioritise AI Policy Correctly

An AI policy is not a bureaucratic monster, but a protective shield – particularly for your intellectual property. Starting with data protection is the wrong approach. The core of an effective AI policy is IP protection, flanked by clear rules on quality, transparency and compliance.

The good news: with the seven building blocks above, you have a solid foundation. Your AI policy doesn’t have to be perfect – but it must exist.

The Johner Institute supports medical device and IVD manufacturers in implementing regulatory requirements, including those relating to AI. Companies that use AI in medical devices are subject to additional requirements that go beyond a general AI policy.